Silicon Masterminds: The Ultimate Brain-of-the-Machine Gauntlet

Think you actually know what happens under that shiny laptop shell when you hit the power button? This quiz puts every rumor, half-remembered fact, and tech myth about central processing units to the test. From microscopic transistors switching at dizzying speeds to instruction sets that decide which software even runs, each question peels back another layer of the silicon story. You will explore how cores cooperate, why caches matter more than raw clock numbers, and what really separates yesterday’s chips from today’s powerhouse designs. Expect tricksy terminology, historic milestones, and performance puzzles that reward real understanding instead of buzzwords. Whether you are a hardware hobbyist, a programmer, or just the curious friend who fixes everyone’s computer, this challenge aims to stretch what you think you know about the beating heart of modern machines.

1

What is instruction pipelining designed to improve in a processor?

2

Which of the following best describes hyper-threading or simultaneous multithreading (SMT)?

3

Why did the industry largely shift focus from simply increasing clock speeds to adding more cores and improving efficiency?

4

Which level of on-chip memory is usually the smallest and fastest, sitting closest to the execution units?

5

In modern desktop chips, what is the main purpose of having multiple cores on a single processor die?

6

Which component inside a processor is primarily responsible for performing arithmetic and logical operations?

7

In a typical modern system, which component communicates directly with the processor over the fastest dedicated interface to provide data and instructions?

8

Which term refers to the smallest unit of data that a processor can directly handle as a single chunk, often 32-bit or 64-bit wide?

9

What does the clock speed of a processor, typically measured in gigahertz (GHz), actually represent?

10

What is the primary role of the control unit inside a processor?


Inside the CPU: The Beating Heart of Modern Machines

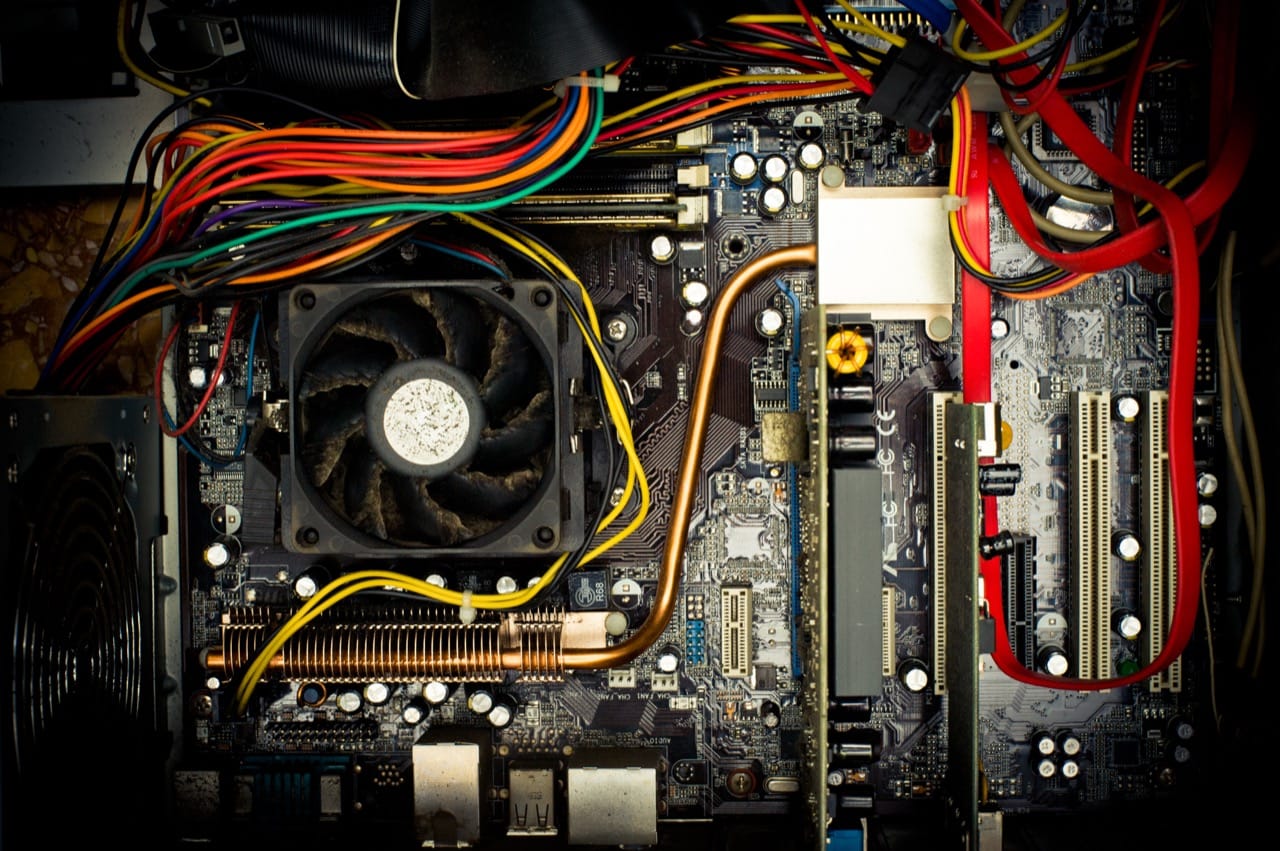

Every time you press the power button on a computer, a complex chain of events begins inside the central processing unit, or CPU. Often called the brain of the machine, the CPU is where instructions are interpreted, calculations are performed, and decisions are made at incredible speed. Yet for most people, what happens under that shiny laptop shell is a mystery filled with buzzwords and half-truths.

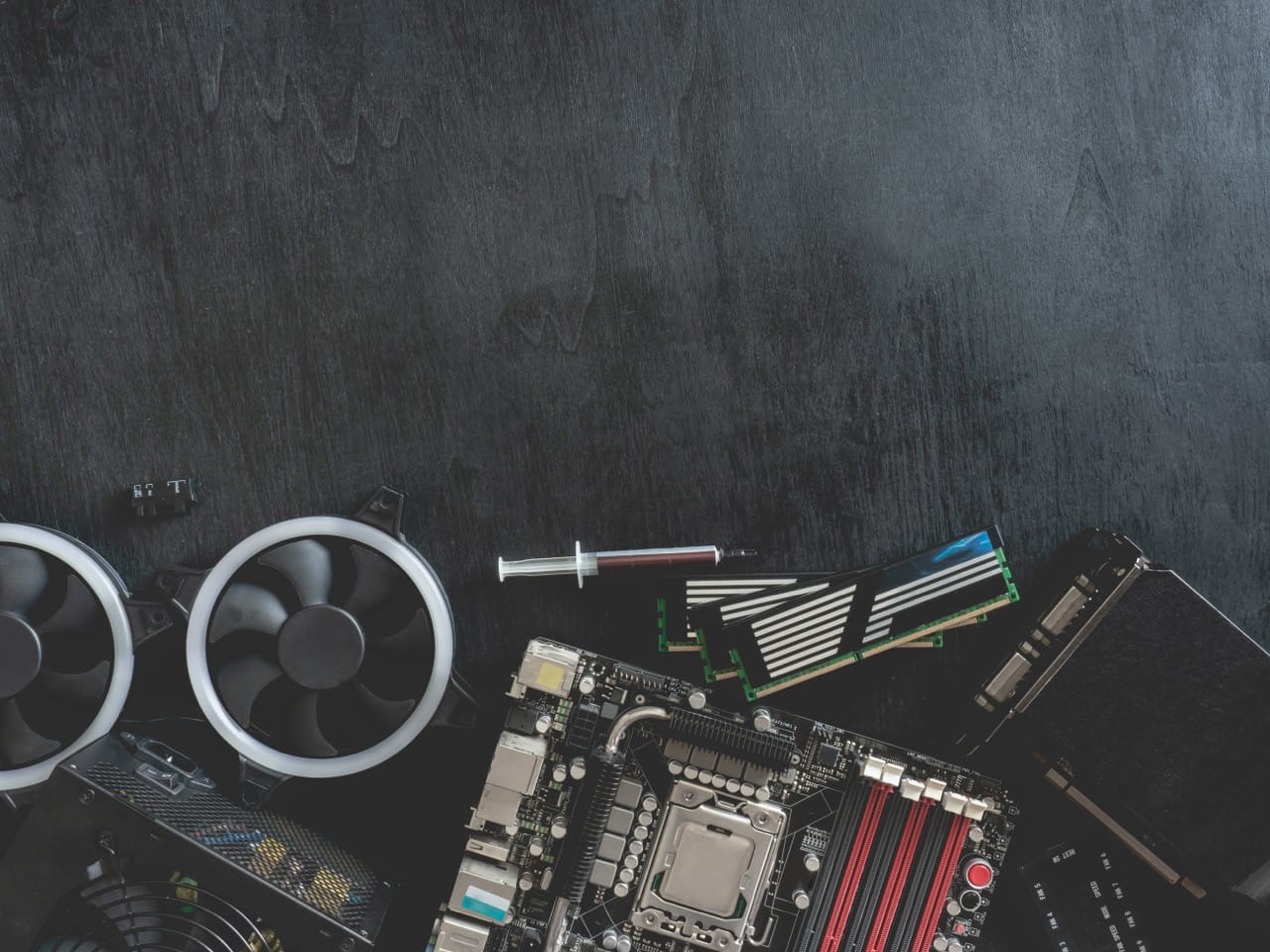

At its core, a CPU is built from microscopic transistors, tiny electronic switches that can turn on and off billions of times per second. These switches form logic gates, which combine to create circuits capable of adding numbers, comparing values, and following logical rules. Modern processors pack billions of transistors onto a chip no larger than a postage stamp, allowing them to handle huge amounts of work in the blink of an eye.
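To make the step from logic gates to arithmetic concrete, here is a minimal Python sketch of a ripple-carry adder built only from AND, OR, and XOR. The function names (`full_adder`, `add_bits`) and the 8-bit width are illustrative assumptions; real hardware does this with transistors in parallel circuits, not a software loop.

```python
# Illustrative sketch: names and the 8-bit width are assumptions, not a real chip design.

def AND(a, b):
    return a & b

def OR(a, b):
    return a | b

def XOR(a, b):
    return a ^ b

def full_adder(a, b, carry_in):
    """Add three single bits using only gates; return (sum_bit, carry_out)."""
    partial = XOR(a, b)
    sum_bit = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return sum_bit, carry_out

def add_bits(x, y, width=8):
    """Chain full adders from the lowest bit upward (a ripple-carry adder)."""
    carry = 0
    result = 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result, carry  # carry == 1 means the sum did not fit in `width` bits

print(add_bits(23, 42))    # (65, 0)
print(add_bits(200, 100))  # (44, 1): 300 overflows 8 bits, leaving 300 - 256 = 44
```

Each bit position needs the carry from the position below it, which is why the carry "ripples" through the chain.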

When your system starts, a small program stored in firmware, often called the BIOS or UEFI, tells the CPU what to do first. The processor then fetches instructions from memory, decodes what they mean, executes them, and writes the results back. This cycle of fetch, decode, execute, and write back repeats continuously, forming the basic rhythm of computing. Every app you use, from a web browser to a game, ultimately boils down to streams of instructions that the CPU follows.
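The cycle can be sketched as a toy interpreter in Python. The three-instruction machine here (`LOAD`, `ADD`, `HALT`) and its two registers are invented for illustration and do not correspond to any real instruction set.

```python
# Toy machine: the opcodes and registers are hypothetical, chosen only to show the cycle.

def run(program):
    registers = {"A": 0, "B": 0}
    pc = 0  # program counter: index of the next instruction
    while True:
        instruction = program[pc]          # fetch
        pc += 1
        opcode, *operands = instruction    # decode
        if opcode == "LOAD":               # execute, then write back to a register
            reg, value = operands
            registers[reg] = value
        elif opcode == "ADD":
            dst, src = operands
            registers[dst] = registers[dst] + registers[src]
        elif opcode == "HALT":
            return registers
        else:
            raise ValueError(f"unknown opcode: {opcode}")

program = [
    ("LOAD", "A", 2),
    ("LOAD", "B", 3),
    ("ADD", "A", "B"),
    ("HALT",),
]
print(run(program))  # {'A': 5, 'B': 3}
```

A real processor overlaps these stages for many instructions at once, but the loop above captures the basic rhythm.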

One of the most important ideas in modern CPUs is the concept of multiple cores. Early personal computers had a single core, meaning the CPU could execute only one instruction stream at a time and had to switch rapidly between tasks to appear to multitask. Today, even budget laptops often have four or more cores. Each core can work on its own tasks or help split a larger job into pieces. This is why a system can play music, download files, and run a video call at the same time without each task waiting its turn. However, more cores do not always mean a faster experience. Software has to be designed to take advantage of them, and other parts of the system, like memory and storage, can become bottlenecks.
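One common way to put a number on "more cores do not always mean faster" is Amdahl's law, which the text above does not name but which models the same idea: only the part of a job that can be split across cores gets faster. The sketch below assumes a fixed parallel fraction and ignores memory and storage bottlenecks; the function name is illustrative.

```python
# Amdahl's law as a rough model; real workloads also hit memory and storage limits.

def amdahl_speedup(parallel_fraction, cores):
    """Ideal speedup when only `parallel_fraction` of the work can use extra cores."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)

for cores in (1, 2, 4, 8, 16):
    half = amdahl_speedup(0.5, cores)
    most = amdahl_speedup(0.9, cores)
    print(f"{cores:>2} cores: 50% parallel -> {half:.2f}x, 90% parallel -> {most:.2f}x")
```

With half the work stuck in a single stream, the speedup can never reach 2x no matter how many cores are added.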

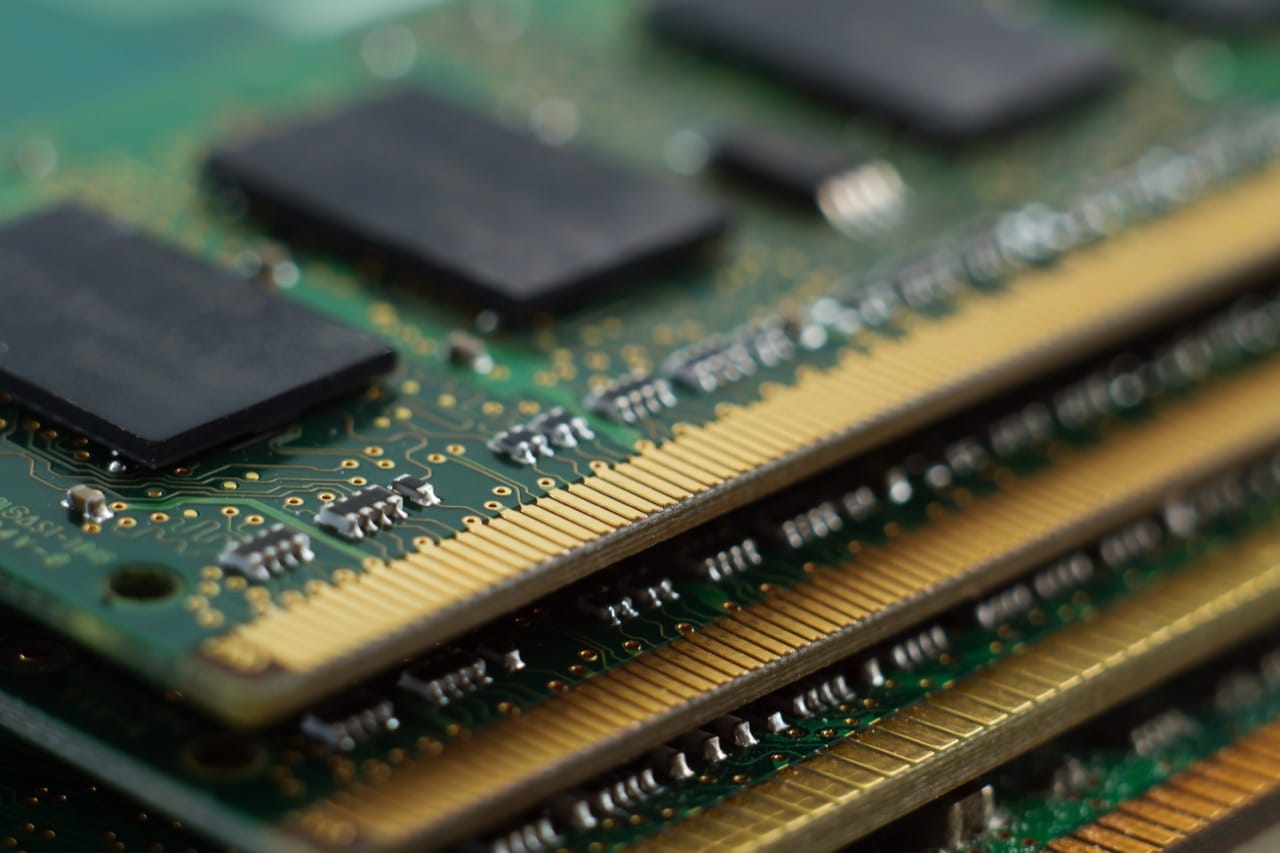

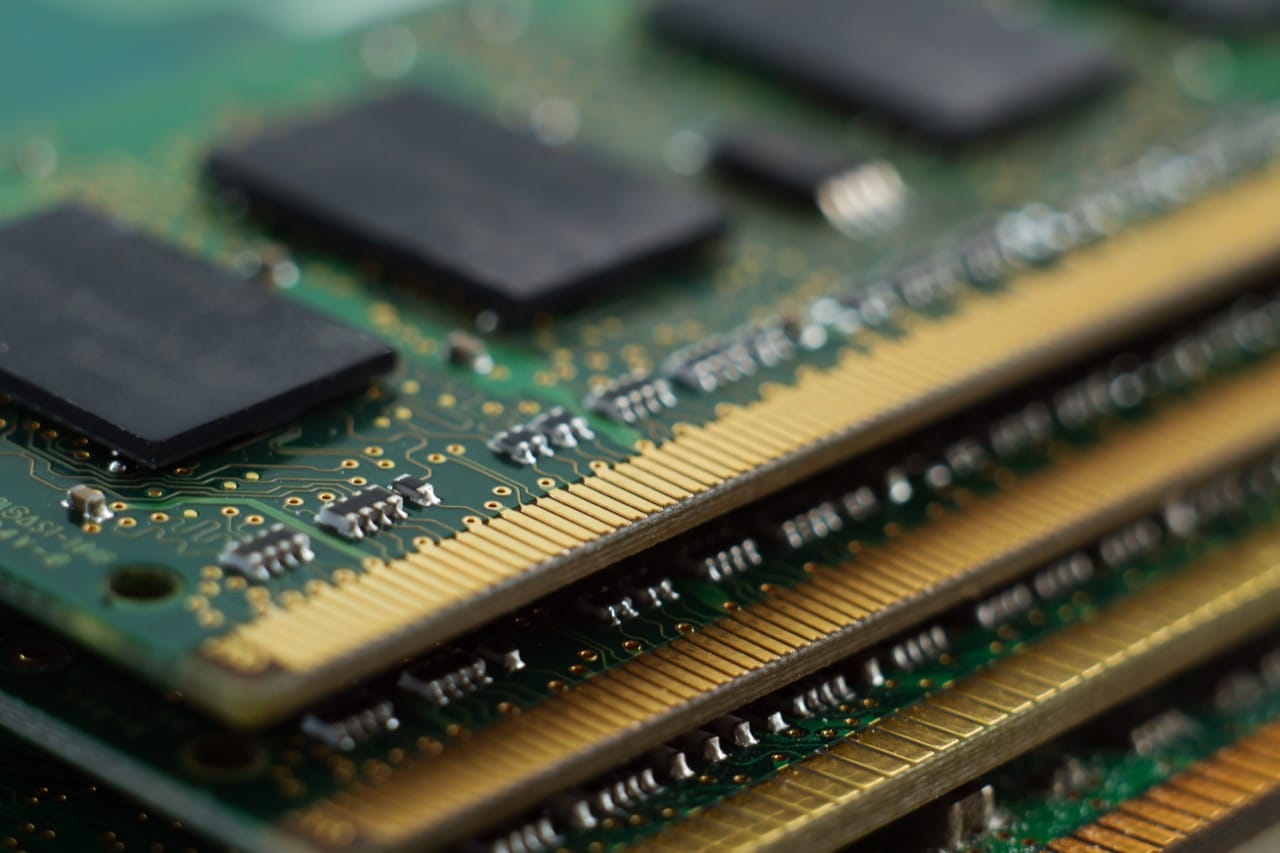

Another key feature is the CPU cache, a small but extremely fast memory built directly into the processor. Accessing main system memory is slow compared to how fast a CPU can operate. To avoid constantly waiting, the processor keeps recent and frequently used data in its caches. There are usually several levels of cache, labeled L1, L2, and sometimes L3, each larger but slower than the one before it. In real-world use, an efficient cache system can improve performance more than simply raising the clock speed, because a core that is waiting on memory gains little from a faster clock.
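A small simulation shows why keeping recently used data close pays off. This sketch assumes a tiny cache that evicts the least recently used entry; real CPU caches work on fixed-size lines and sets, so treat the function `hit_rate` and its parameters as a simplified illustration.

```python
from collections import OrderedDict

# Simplified model: a tiny cache with least-recently-used eviction (an assumption).

def hit_rate(addresses, capacity):
    """Return the fraction of accesses served from the cache."""
    if not addresses:
        return 0.0
    cache = OrderedDict()
    hits = 0
    for addr in addresses:
        if addr in cache:
            hits += 1
            cache.move_to_end(addr)        # mark as most recently used
        else:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the least recently used entry
            cache[addr] = True
    return hits / len(addresses)

reused = [0, 1, 2, 3] * 10       # the same four addresses, over and over
streaming = list(range(40))      # forty addresses, each touched once

print(hit_rate(reused, capacity=4))     # 0.9
print(hit_rate(streaming, capacity=4))  # 0.0
```

Programs that revisit the same data benefit enormously from the cache, while a program that touches each address once gets no help from it at all.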

Clock speed, measured in gigahertz, describes how many cycles per second the CPU can perform. It is tempting to treat this number as a simple score for speed, but that can be misleading. Different CPU designs can do more or less work per clock cycle. A newer processor at a lower clock speed can still outperform an older one with a higher number, thanks to improvements in architecture, better branch prediction, wider pipelines, and smarter execution units.
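A rough back-of-the-envelope model makes the point: throughput is approximately clock rate multiplied by instructions completed per cycle (IPC). The two chips and their numbers below are made up for illustration, and real IPC varies from one workload to the next.

```python
# Hypothetical chips: the clock speeds and IPC values are invented for illustration.

def instructions_per_second(clock_ghz, ipc):
    """Approximate throughput as cycles per second times instructions per cycle."""
    return clock_ghz * 1e9 * ipc

older = instructions_per_second(clock_ghz=4.0, ipc=1.0)
newer = instructions_per_second(clock_ghz=3.0, ipc=2.0)

print(f"older chip: {older:.1e} instructions/s")
print(f"newer chip: {newer:.1e} instructions/s")
print("newer is faster" if newer > older else "older is faster")
```

Under these assumed numbers, the 3 GHz chip does more work per second than the 4 GHz one because it completes twice as many instructions each cycle.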

Instruction sets are another important piece of the story. An instruction set defines the basic operations a CPU understands, such as adding numbers, jumping to another part of a program, or loading data from memory. Families like x86, x86-64, and ARM each have their own instruction sets. Software must be compiled for the correct one, which is why a program built for a phone may not run on a desktop without translation or emulation.
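You can ask your own machine which family it belongs to. The sketch below uses Python's standard `platform.machine()`, which reports names such as `x86_64`, `AMD64`, `arm64`, or `aarch64` depending on the operating system. The alias table and the `needs_translation` helper are illustrative assumptions, not a complete list.

```python
import platform

# Illustrative alias table: different systems report the same family under different names.
ALIASES = {
    "x86_64": "x86-64",
    "amd64": "x86-64",
    "arm64": "arm64",
    "aarch64": "arm64",
}

def family(name):
    """Map a reported architecture name to a family, or return it unchanged."""
    return ALIASES.get(name.lower(), name.lower())

def needs_translation(program_arch, host_arch):
    """True if a program built for one family would need emulation on the host."""
    return family(program_arch) != family(host_arch)

host = platform.machine()
print(f"This machine reports: {host!r}")
print("An arm64 program needs translation here:", needs_translation("arm64", host))
```

This simple comparison is only a first approximation; operating system and library differences also decide whether a program runs.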

Over time, CPUs have evolved from simple, single-core chips to complex systems that include integrated graphics, power management, and specialized acceleration features. Despite this complexity, the core idea remains the same: follow instructions, move data, and make decisions as efficiently as possible. Understanding how cores, caches, and instruction sets work together turns the CPU from a mysterious black box into a fascinating, layered machine at the heart of every digital experience.