From Abacus to App Store: How Computing Grew Up

Long before anyone carried a computer in a pocket, people were already trying to offload mental work onto tools. The abacus, used in various forms for thousands of years, is a reminder that computing began as organized counting. What changed over time was not the desire to calculate, but the ambition to automate rules and processes so that a device could follow steps reliably, even for problems too long or tedious for a person.

In the 1600s, mechanical calculators appeared that could add and subtract using gears and dials. Blaise Pascal built a machine to help his tax-collector father, and Gottfried Wilhelm Leibniz later designed a stepped drum mechanism that could multiply. These devices were impressive, but they were specialized. The big leap was the idea that one machine could be reconfigured to perform many kinds of calculations. In the 1800s, Charles Babbage proposed the Difference Engine for producing mathematical tables, then imagined something far more general: the Analytical Engine, which would use a “store” for data and a “mill” for processing, concepts that resemble memory and a CPU. Ada Lovelace, working from Babbage’s plans, wrote what many consider the first published algorithm intended for a machine, and she also grasped a radical possibility: a computer could manipulate symbols, not just numbers, hinting at future uses like music and graphics.

Another thread came from industry. Punch cards, first used to control textile looms, showed how instructions could be encoded in a physical medium. Later, Herman Hollerith used punched cards to speed up the 1890 US Census, turning data processing into a business and laying groundwork for what would become IBM. The punch card era lasted surprisingly long; even in the mid-20th century, stacks of cards were a common sight in offices and universities.

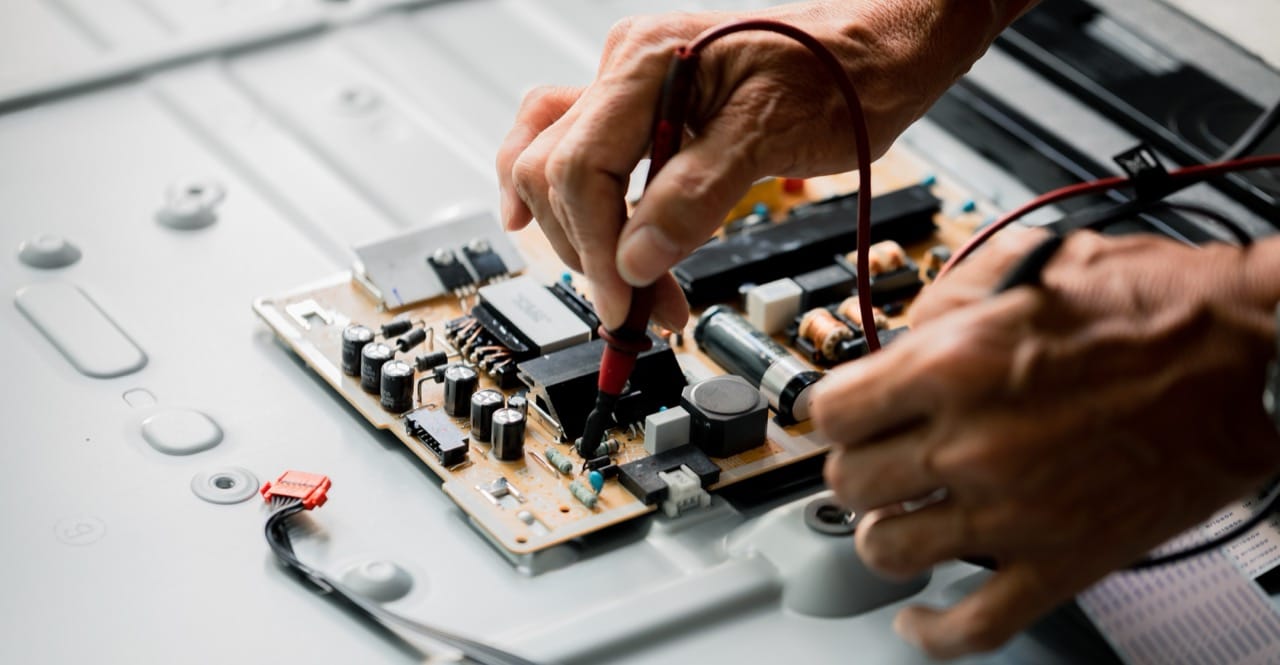

The 1930s and 1940s brought a turning point as electricity replaced purely mechanical motion. During World War II, codebreaking and ballistics demanded faster computation, fueling projects like Colossus in Britain and ENIAC in the United States. Early electronic computers used vacuum tubes, which could switch quickly but generated heat and failed often, helping explain why the machines were enormous and required constant maintenance. Around the same time, a key design principle emerged: the stored-program concept, associated with John von Neumann’s architecture, in which instructions and data live in the same memory. This made computers far more flexible, because changing a program no longer meant rewiring the machine.
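The stored-program idea can be illustrated with a toy machine. This is a minimal sketch, not a model of any historical computer: the opcodes (`LOAD`, `ADD`, `STORE`, `HALT`), the two-cell instruction format, and the memory layout are all invented for illustration. What it shows is that instructions and data sit in the same list, so changing the program means changing a value in memory.

```python
# Toy stored-program machine: instructions and data share one memory list.
# Opcodes and layout are hypothetical, chosen only to illustrate the idea.
LOAD, ADD, STORE, HALT = 1, 2, 3, 0


def run(memory):
    """Execute the program held in `memory`, starting at address 0."""
    acc = 0  # accumulator register
    pc = 0   # program counter: address of the next instruction
    while True:
        # Each instruction occupies two cells: an opcode and an operand address.
        opcode, operand = memory[pc], memory[pc + 1]
        pc += 2
        if opcode == LOAD:
            acc = memory[operand]
        elif opcode == ADD:
            acc += memory[operand]
        elif opcode == STORE:
            memory[operand] = acc
        elif opcode == HALT:
            return memory
        else:
            raise ValueError(f"unknown opcode {opcode} at address {pc - 2}")


def make_program():
    """Return a fresh memory image: code at addresses 0-7, data at 8-10."""
    return [
        LOAD, 8,    # addresses 0-1: acc = memory[8]
        ADD, 9,     # addresses 2-3: acc += memory[9]
        STORE, 10,  # addresses 4-5: memory[10] = acc
        HALT, 0,    # addresses 6-7: stop
        2, 3,       # addresses 8-9: input data
        0,          # address 10: result cell
    ]


result = run(make_program())[10]  # 2 + 3

# "Reprogramming" is just a memory write: point ADD at address 8 instead of 9.
patched = make_program()
patched[3] = 8
patched_result = run(patched)[10]  # 2 + 2
```

Here `result` is 5 and `patched_result` is 4. The second run differs only because one memory cell holding part of an instruction was overwritten, which is the flexibility the stored-program design provides.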

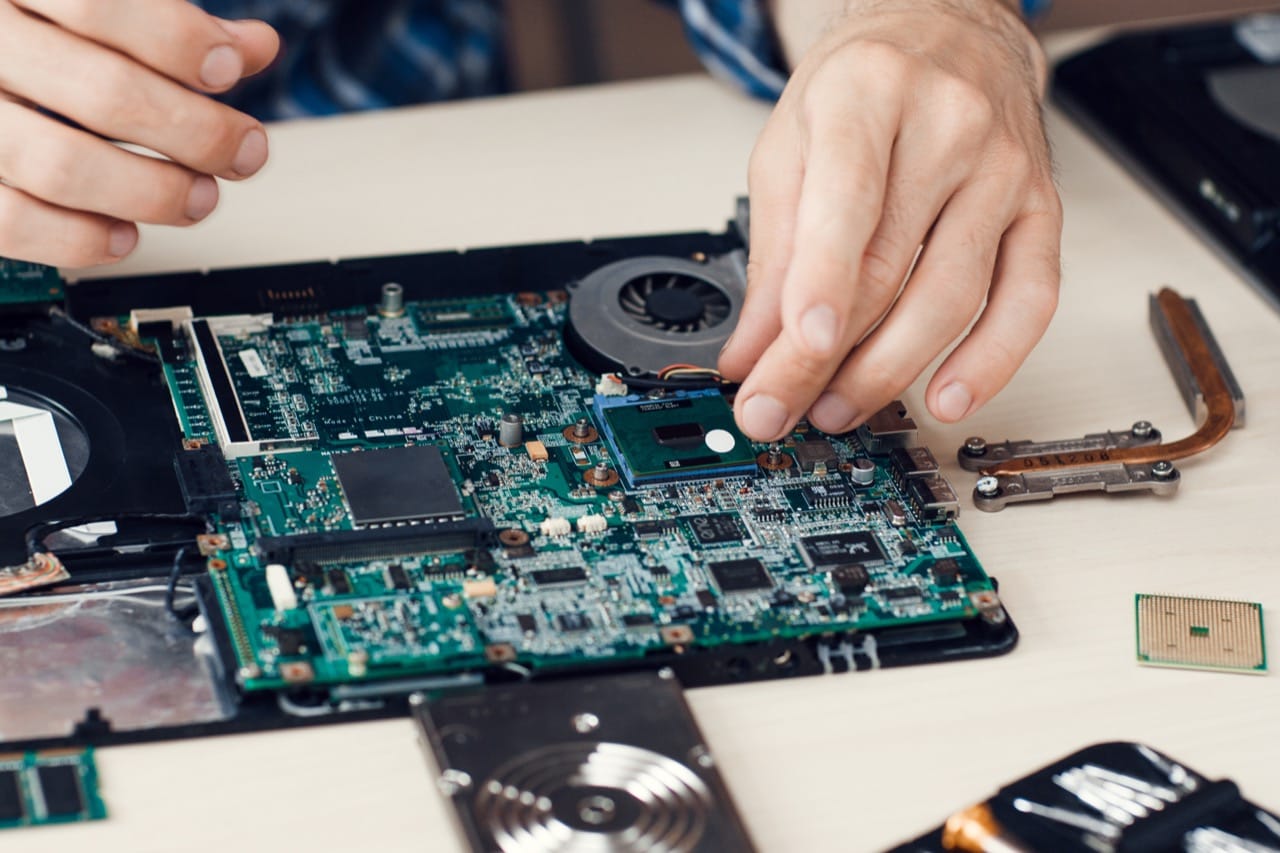

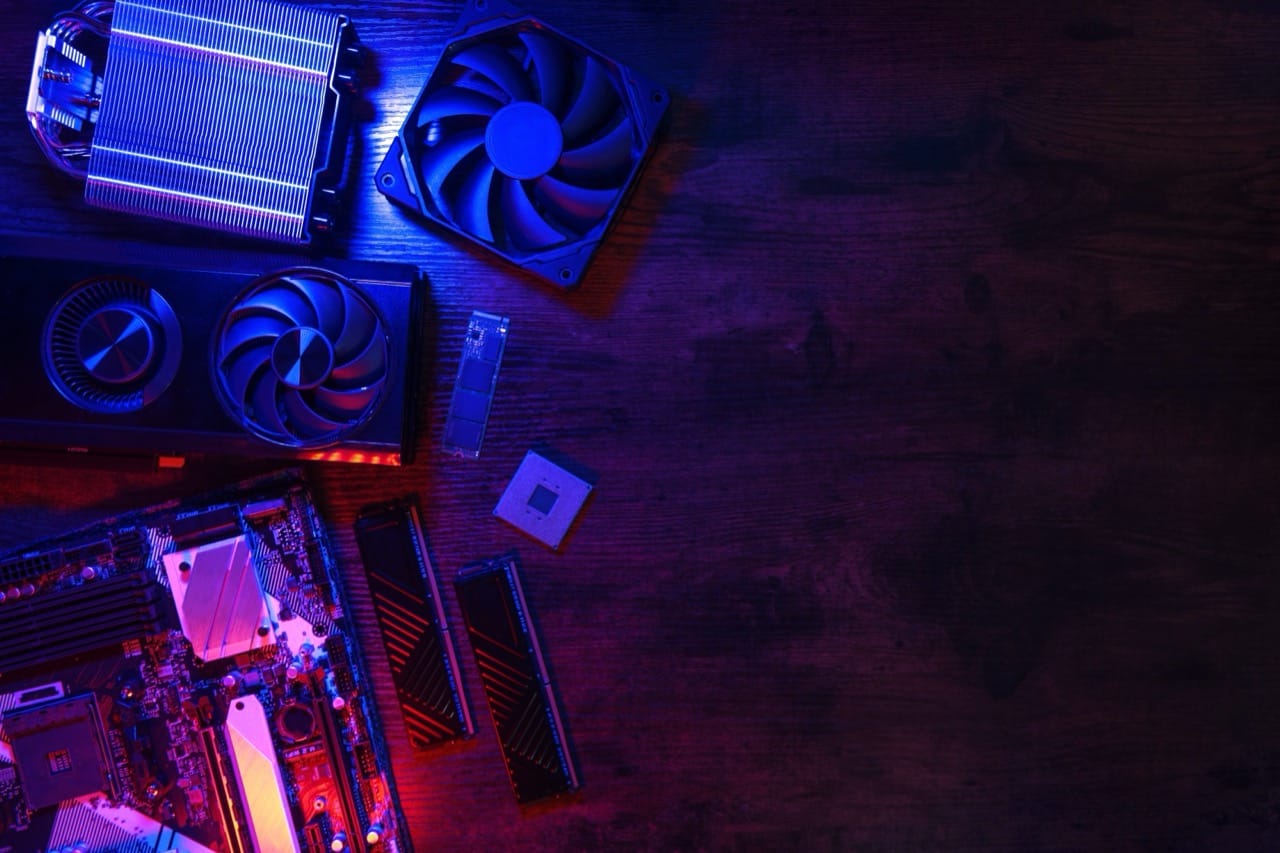

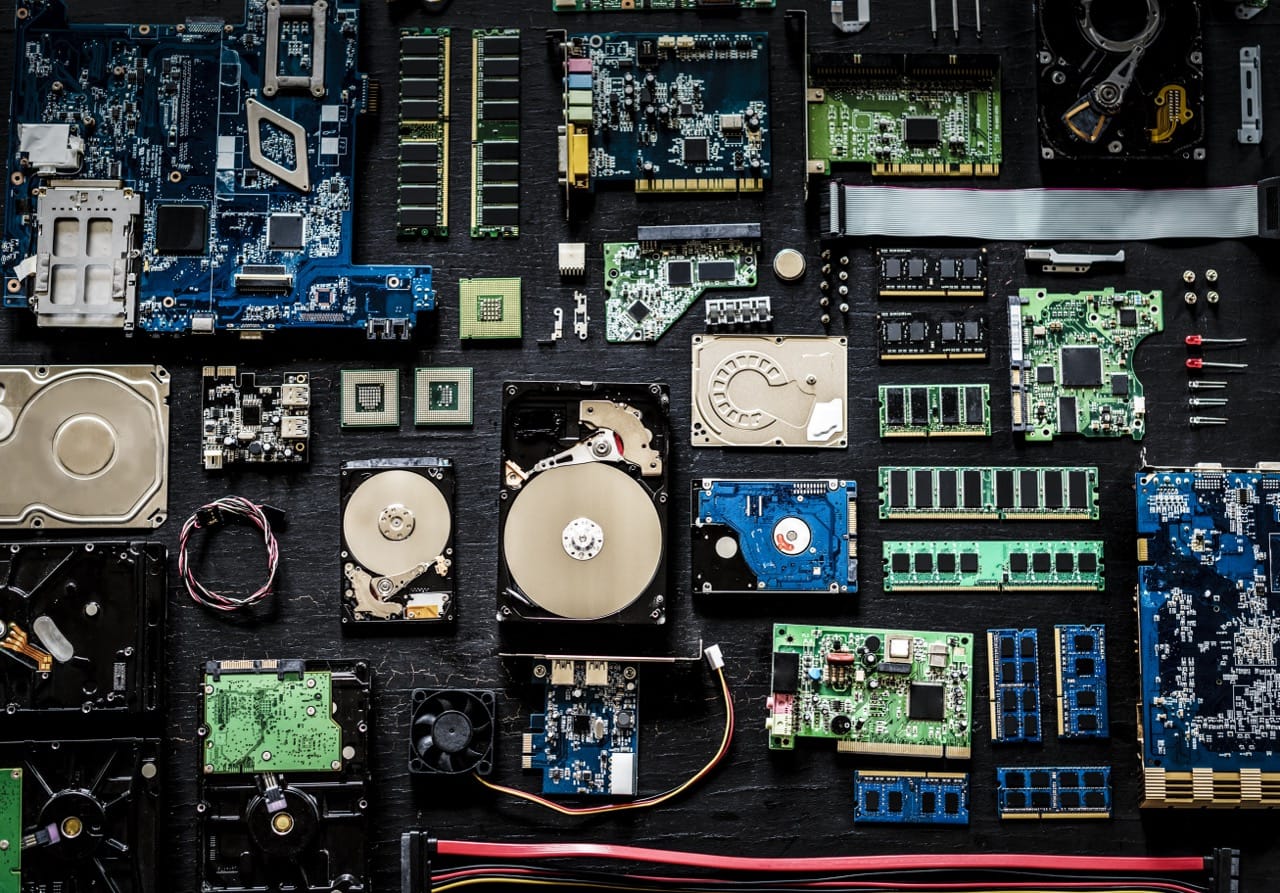

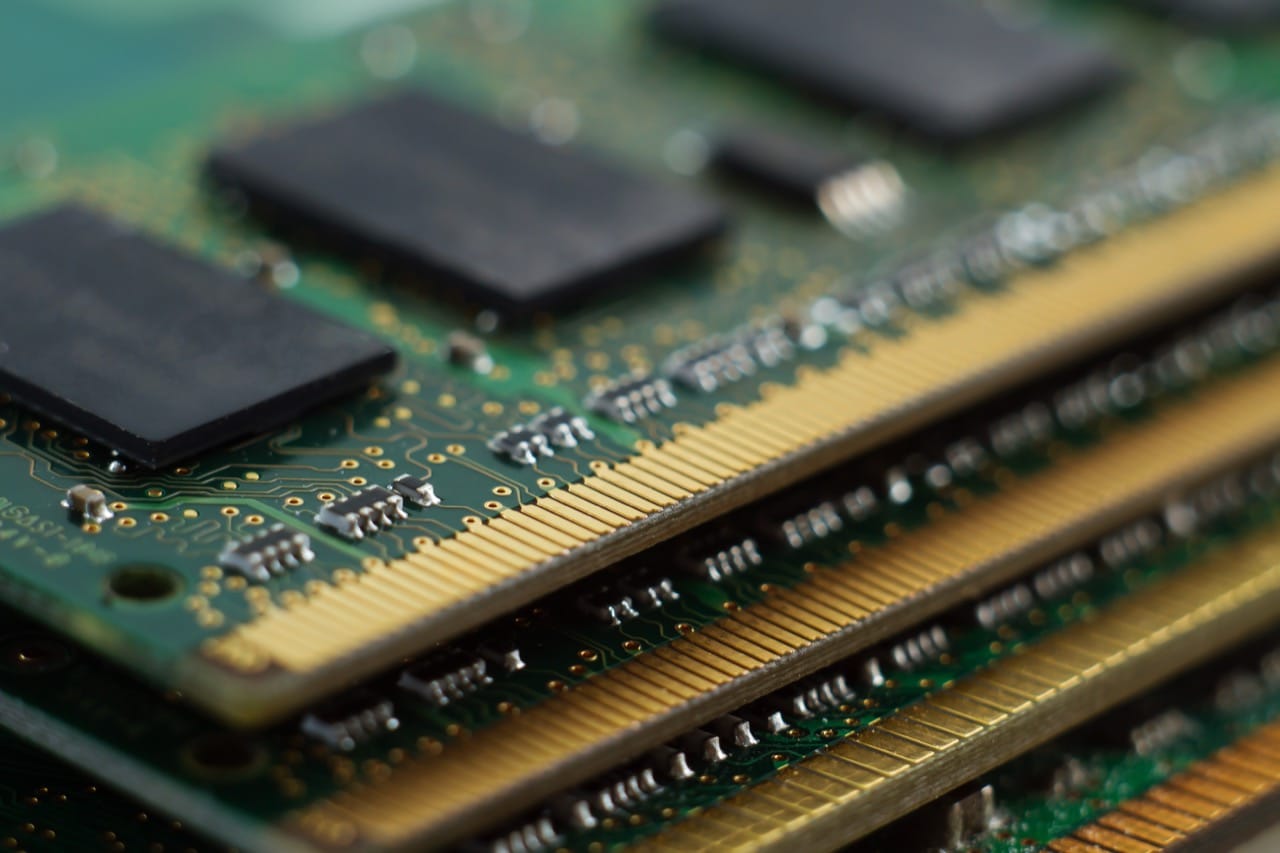

Hardware kept shrinking and improving. The transistor, demonstrated at Bell Labs in 1947, replaced vacuum tubes with smaller, more reliable switches. Then integrated circuits packed multiple components onto a single chip, a breakthrough developed independently by Jack Kilby at Texas Instruments and Robert Noyce at Fairchild Semiconductor in the late 1950s. With each step, computers became less like room-sized experiments and more like products. The microprocessor, introduced in the early 1970s, put a full central processing unit on one chip, making it possible to build affordable personal computers.

The personal computing wave was driven as much by culture as by engineering. Hobbyist kits, early home machines, and the rise of user-friendly software expanded the audience beyond specialists. Graphical interfaces, the mouse, and networking ideas developed in research labs later reached mainstream devices. Today’s phones and laptops still rely on the same core building blocks: binary logic, memory hierarchies, and programmable processors. The app store era can feel worlds away from punch cards, yet the underlying story is continuous: humans keep finding better ways to represent information, write instructions, and build machines that can execute those instructions at breathtaking speed.