Silicon Milestones Computer History Quick Quiz


Silicon Milestones: A Quick Tour of Computer History That Changed Everyday Life

Computer history can feel like a blur of acronyms and old photos, but it is really a story of people trying to solve practical problems faster, more reliably, and at larger scale. Long before electronics, inventors imagined machines that could follow steps. In the 1830s, Charles Babbage designed the Analytical Engine, a mechanical concept that included ideas we now recognize as a processor, memory, and input and output. Ada Lovelace, writing about it, described how such a machine could manipulate symbols, not just numbers, which sounds remarkably like modern computing.

The leap from ideas to working systems accelerated in the 20th century. During World War II, the need for codebreaking and ballistics calculations pushed governments to fund computing research. Machines like Colossus and ENIAC were enormous, power-hungry, and difficult to program, but they proved electronic speed could transform what was possible. A few years earlier, in 1936, Alan Turing’s theoretical work had clarified what it means for a machine to compute, and his concept of a universal machine helped define the boundaries and potential of computation itself.

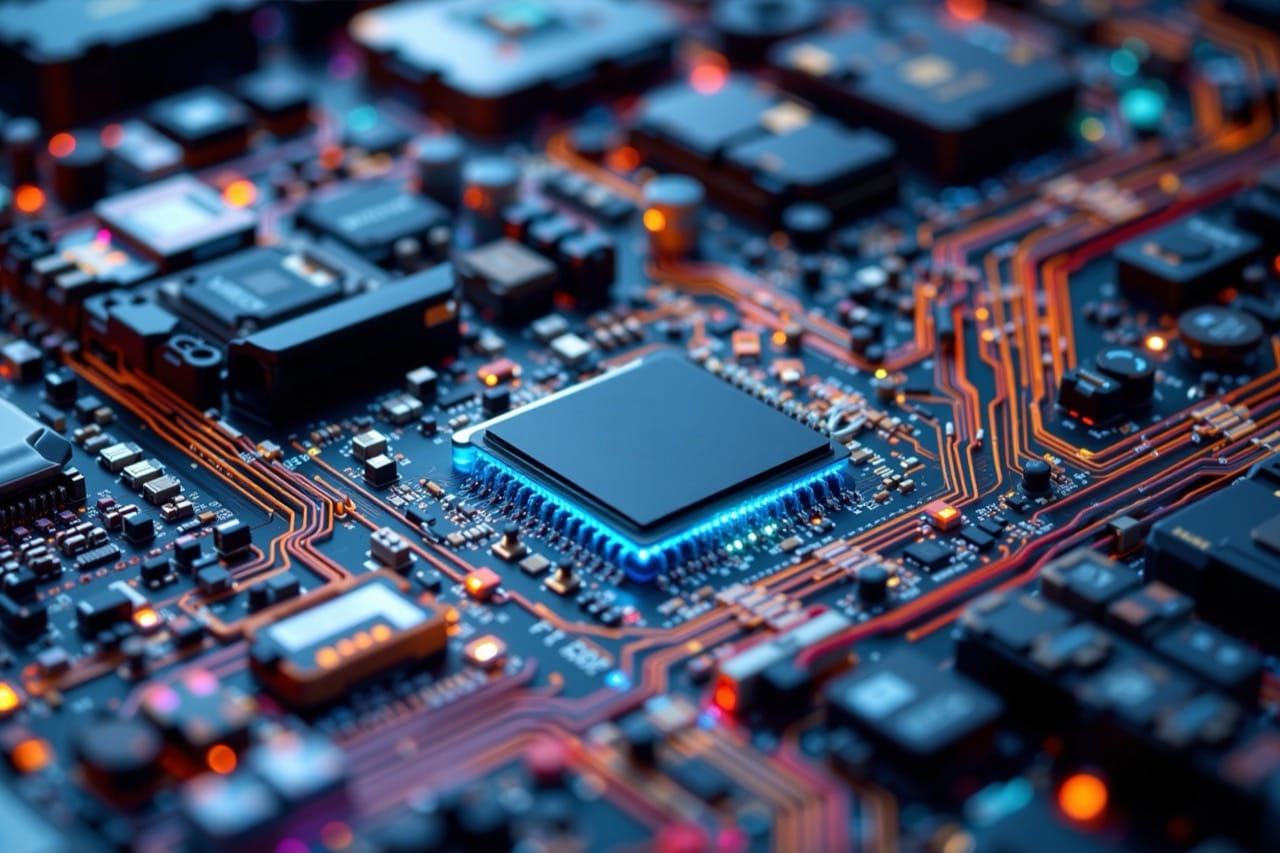

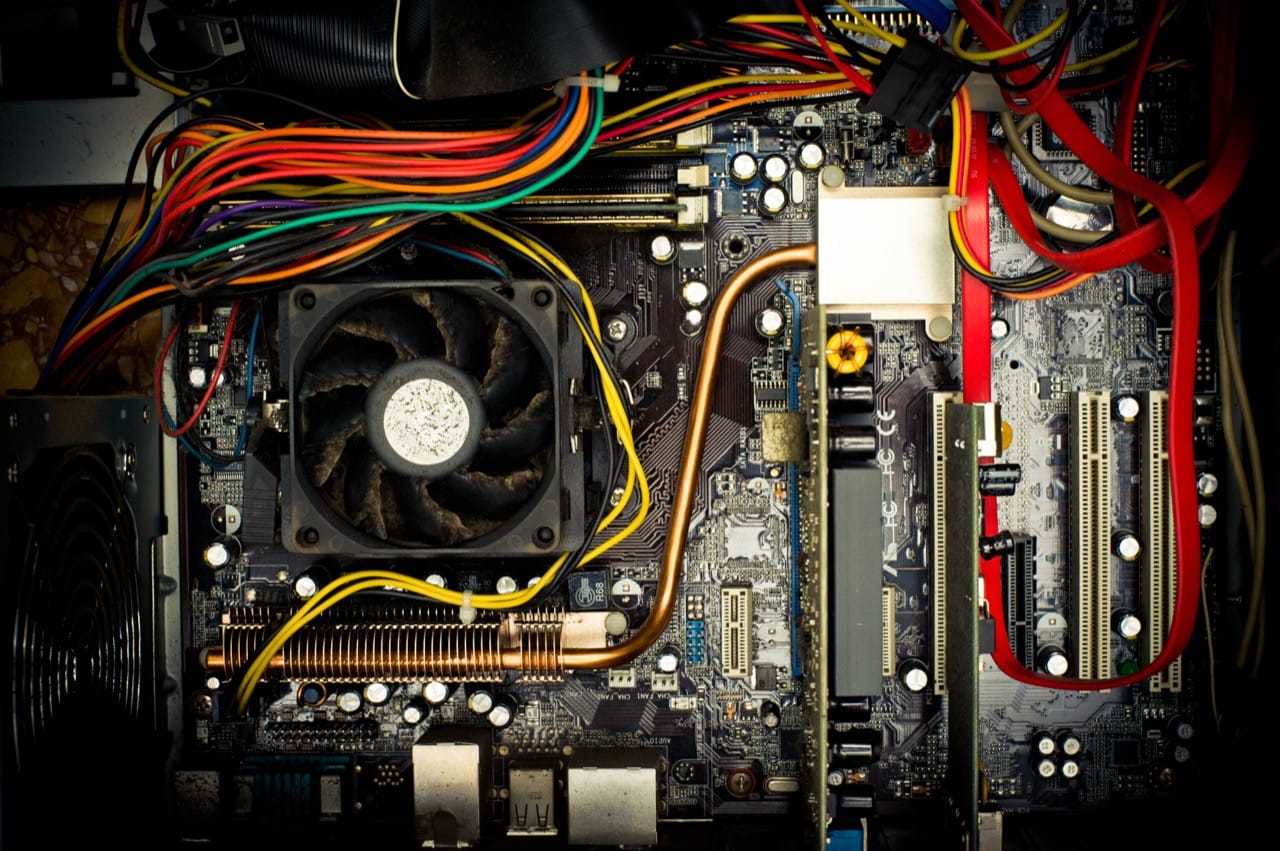

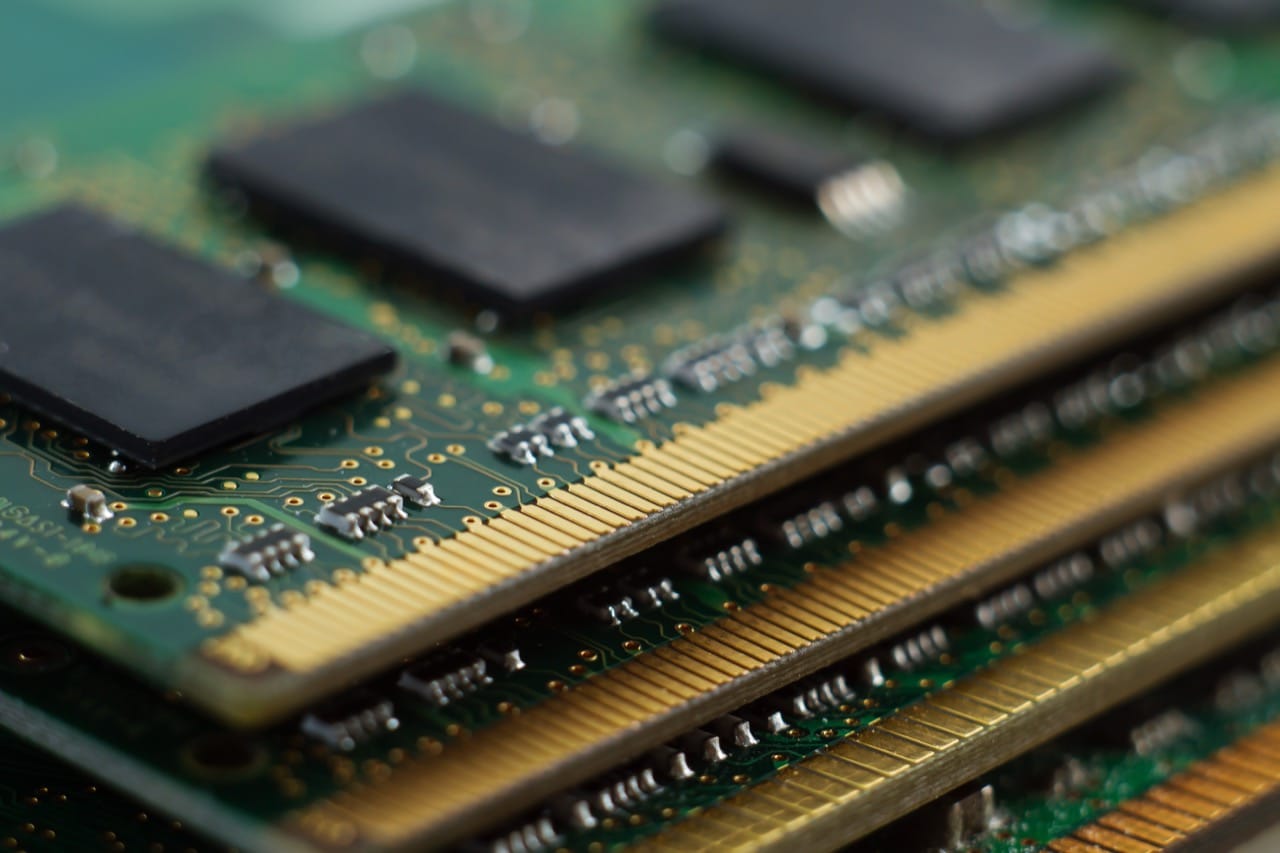

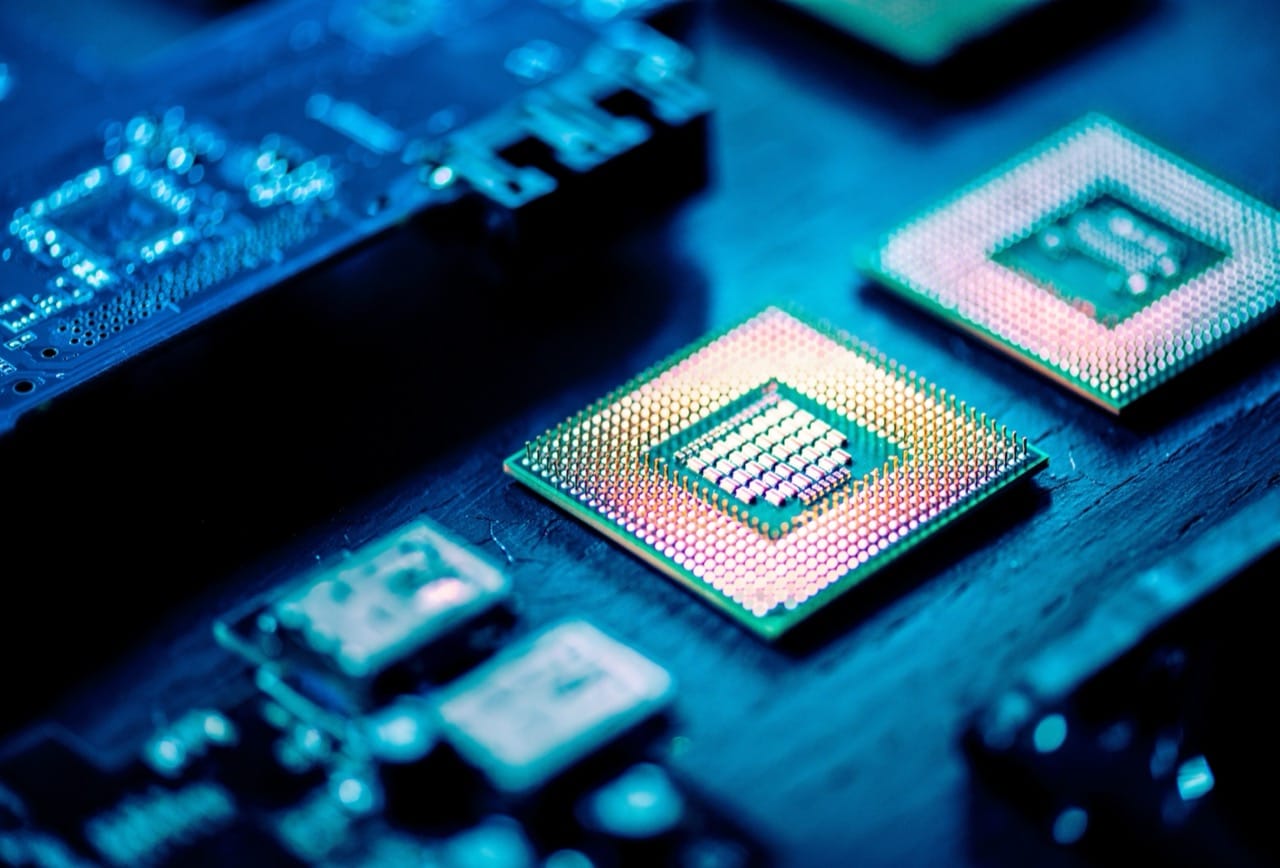

Early computers relied on vacuum tubes, which were fragile and generated a great deal of heat. The invention of the transistor at Bell Labs in 1947 changed the trajectory of technology. Transistors were smaller, more reliable, and more efficient, paving the way for computers that could leave the laboratory and enter businesses. The next major step was the integrated circuit, which put many components on a single chip. This made mass production easier and lowered costs, helping computers spread into universities, companies, and eventually homes.

Software evolved alongside hardware. Early programmers often worked directly with machine instructions, but higher-level languages made coding more practical and less error-prone. FORTRAN helped scientists and engineers, while COBOL became a workhorse for business data processing. Operating systems emerged to manage hardware resources and let multiple programs share a machine. UNIX, developed in the late 1960s and early 1970s, became especially influential because it was portable and encouraged a culture of small tools that could be combined. Many ideas from UNIX still shape modern systems, from servers to smartphones.

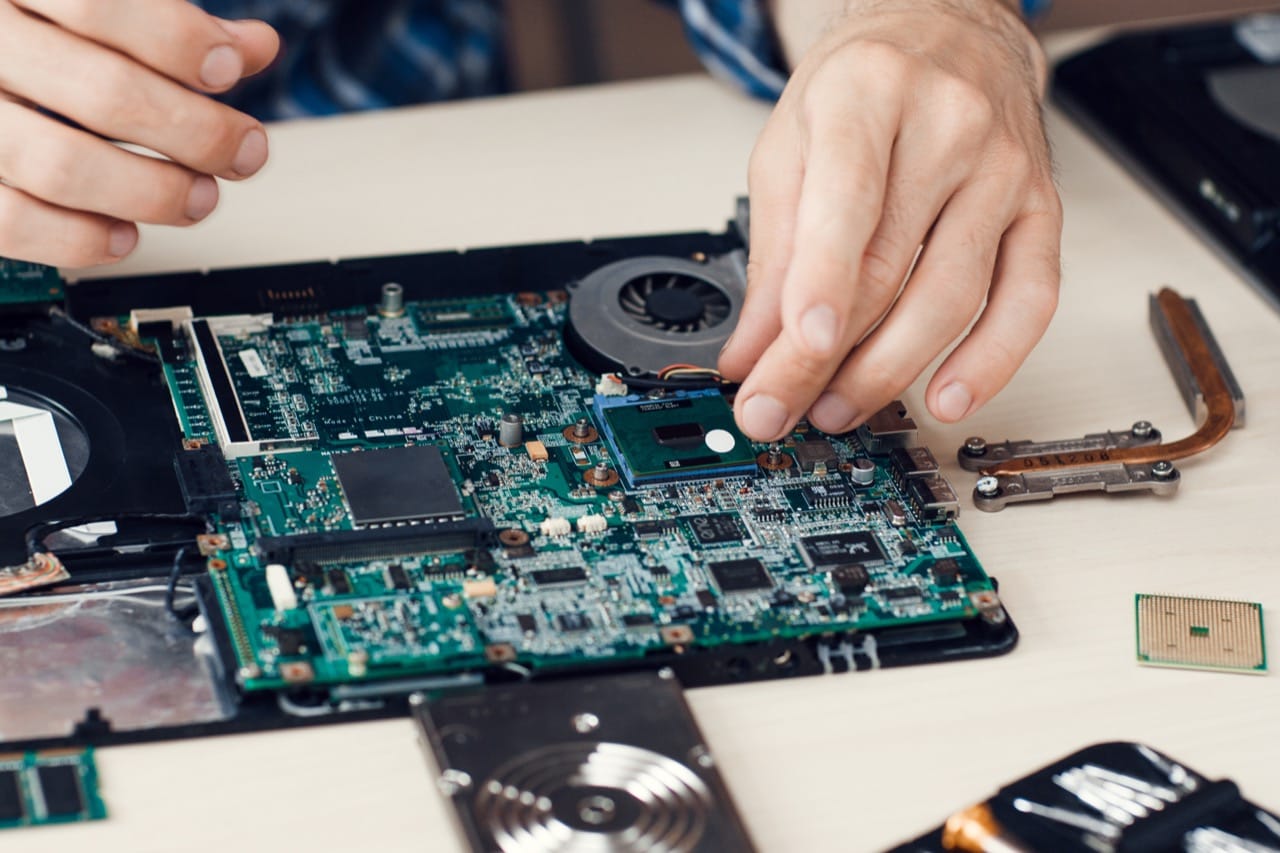

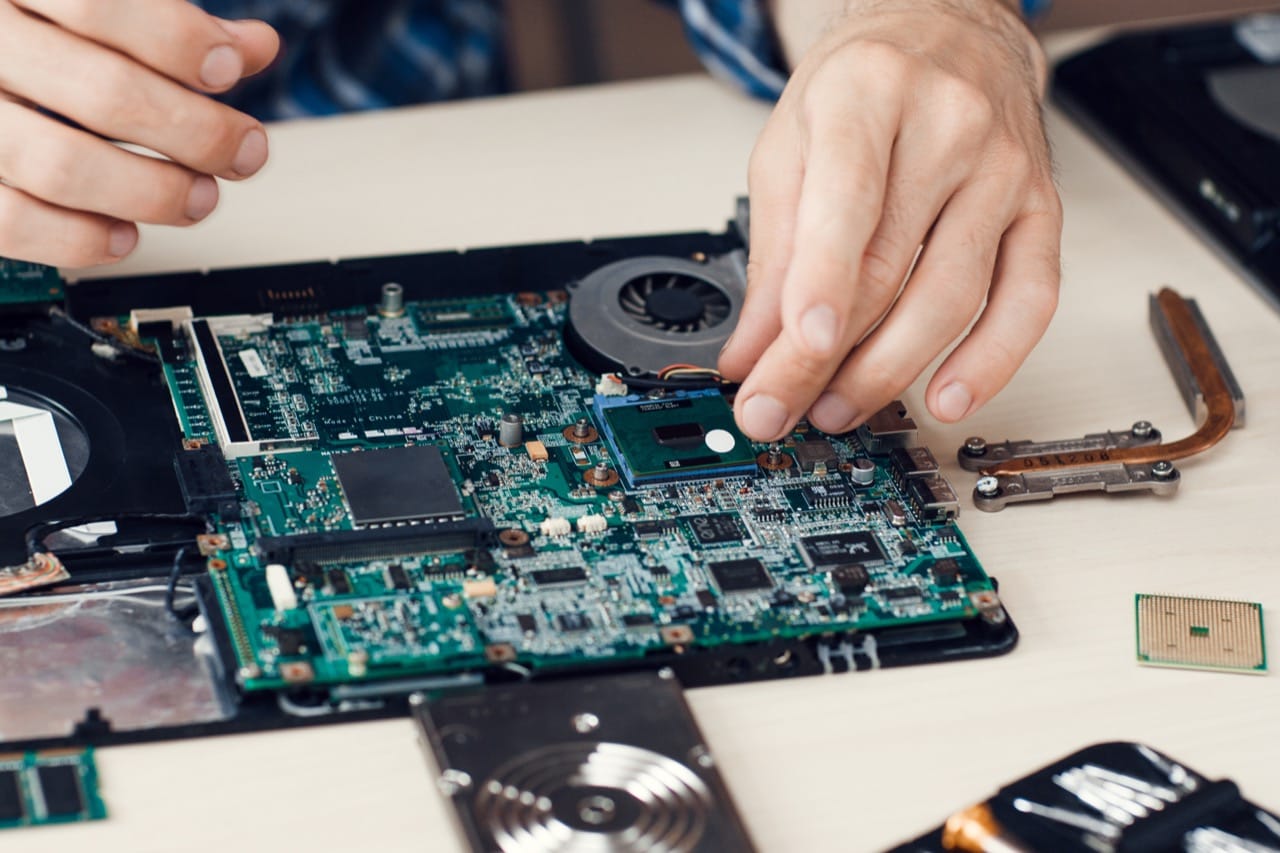

Few milestones feel as dramatic as the microprocessor. Once a full CPU could fit on a single chip, the economics of computing shifted decisively. Chips like the Intel 4004, introduced in 1971, and the processors that followed drove the personal computer revolution, enabling machines that individuals could own. Iconic systems from the late 1970s and 1980s brought computing to bedrooms, classrooms, and small offices, and they helped popularize graphical interfaces, the mouse, and desktop publishing. Storage also transformed, moving from punch cards and magnetic tape to floppy disks, hard drives, and eventually solid-state memory that can hold entire libraries in your pocket.

Networking tied everything together. ARPANET demonstrated packet switching, a method of breaking data into chunks that can travel different routes and still arrive intact. TCP and IP became the core standards that let many networks interconnect, creating the internet. Later, the World Wide Web made the internet easier to use by linking documents with clickable hyperlinks, helping it explode into mainstream life. From dial-up modems to Wi-Fi and fiber, faster connections changed what people expect computers to do, from sending email to streaming video.
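The core idea of packet switching can be illustrated with a small Python sketch. This is a toy model and not how ARPANET or TCP/IP actually work: the function names `packetize` and `reassemble` are made up for illustration, and shuffling the list simply stands in for packets taking different routes. It shows only that numbered chunks can arrive in any order and still be put back together.

```python
import random


def packetize(message: bytes, size: int) -> list[tuple[int, bytes]]:
    """Split a message into (sequence_number, chunk) packets (hypothetical helper)."""
    return [
        (seq, message[start:start + size])
        for seq, start in enumerate(range(0, len(message), size))
    ]


def reassemble(packets: list[tuple[int, bytes]]) -> bytes:
    """Rebuild the message by sorting packets on their sequence number."""
    ordered = sorted(packets, key=lambda packet: packet[0])
    return b"".join(chunk for _, chunk in ordered)


message = b"Packets may travel different routes."
packets = packetize(message, size=8)

# Simulate packets arriving out of order after taking different paths.
random.shuffle(packets)

# The sequence numbers let the receiver restore the original order.
assert reassemble(packets) == message
```

Real networks also have to cope with lost, duplicated, and corrupted packets, which this sketch leaves out.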

Today’s devices pack billions of transistors, and advances in chip design, manufacturing, and software allow phones to outperform the room-sized machines of the past. Understanding the milestones helps explain why certain names and firsts matter: each breakthrough lowered barriers, expanded access, and reshaped how humans store knowledge, automate work, and connect across the world.